The Heroic Visual-Effects Challenge of Superman Returns

Imageworks did the heavy lifting for the man of steel’s digital double, built Metropolis, and helped Superman rescue an airplane early in the film. Framestore CFC created Lex Luthor’s crystal island, where the climactic fight takes place. Rhythm & Hues built Superman’s Fortress of Solitude with the Jor-El hologram, and surrounded Luthor’s yacht with a digital ocean for a dramatic sea rescue. Frantic opened the show with shots of Krypton and the red sun and created a sequence showing the birth of Lex Luthor’s island continent.

In addition, Photon removed rigs and shot miniatures for the Krypton sequence and a shipwreck. “They had the third-largest number of shots,” Stetson says. Rising Sun sent young Clark Kent leaping over a cornfield. The Pixel Liberation Front did previs on the effects sequences. Eden and Lola handled digital makeup, and Lola took care of some paint-outs as well. And New Deal Studios destroyed Metropolis and sent fire raging through a tunnel.

Stetson, who won an Oscar for The Lord of the Rings: The Fellowship of the Ring, began working on Superman Returns two years ago and spent the first year on location in Sydney, where they filmed Routh flying in green-screen rooms.

“To give the editors and Bryan [Singer] comfort, we shot green-screen elements even though we thought we’d do the shots digitally,” says Stetson. “We had some duplication, but not a lot, and what we shot was always good for reference. Sony had a long development program to show Bryan what could be done digitally.”

Digital Superman

Using scans of Routh taken before shooting began, Imageworks started developing a pipeline for his digital double. To create Routh’s face, the studio returned to a process used for Doc Ock in Spider-Man 2, the Light Stage 2 system developed by Paul Debevec at the USC Institute for Creative Technologies. With this system, cameras positioned around an actor seated in a chair snap photos as a mechanical arm filled with strobe lights rotates around the chair. The photos are stitched together to create sets of image-based rendering (IBR) texture maps that reproduce the face as it’s lit from a particular direction at a single moment in time. Imageworks developed algorithms to stitch the photos together, remove highlights, and extract the reflection from the skin. At the end of the process, Imageworks had created 480 sets of reflectance maps – 70 GB of texture data. The studio’s custom software automatically wrapped the resulting reflectance maps around a model of the actor’s head based on CG lighting in a virtual scene.

“It takes several months of work,” says Rich Hoover, visual effects supervisor at Imageworks for Superman Returns. “But it makes it possible to put the proper highlights and key lights on the skin procedurally wherever the key light is in a scene.”
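The principle behind this kind of image-based relighting can be sketched in a few lines. Because light adds linearly, a face captured under many single-direction lights can be relit for a new key light by blending the captured maps, weighted by how closely each capture direction matches the new light. This is only an illustration of the idea, not Imageworks’ pipeline: the function names, the cosine-power weighting, the normalization, and the tiny three-pixel "maps" are all assumptions made for the example.

```python
import math

def normalize(v):
    """Scale a 3D vector to unit length."""
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def relight(basis, key_dir, sharpness=8.0):
    """Blend captured maps for a new key-light direction.

    basis: list of (light_direction, pixels) pairs, one per captured
           lighting direction; pixels is a flat list of intensities.
    key_dir: direction of the new key light in the virtual scene.
    sharpness: hypothetical falloff exponent (an assumption, not a
               measured value); higher values favor the nearest capture.
    """
    key = normalize(key_dir)
    weights = []
    for direction, _ in basis:
        d = normalize(direction)
        # Cosine of the angle between captured light and new key light;
        # captures facing away from the key light contribute nothing.
        cosine = max(0.0, sum(a * b for a, b in zip(d, key)))
        weights.append(cosine ** sharpness)

    pixel_count = len(basis[0][1])
    total = sum(weights)
    if total == 0.0:
        return [0.0] * pixel_count

    # Weighted average of the captured maps, pixel by pixel.
    return [
        sum(w * pixels[i] for w, (_, pixels) in zip(weights, basis)) / total
        for i in range(pixel_count)
    ]
```

With two captures lit from the x and y axes, a key light along x returns the first map unchanged, and a key light halfway between them returns an even mix of the two.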

To capture the photographs, Imageworks used six Arriflex film cameras shooting at 60 fps, two more than they had used for Spider-Man 2 in order to cover more angles. They also used a higher frame rate. “The most imprecise issue with the capture is that the actor moves,” says Hoover. The crew synchronized the cameras to each other and to the rapidly changing light sources.

Imageworks developed algorithms that change the reflectance data as the digital Superman's face moves. “Aesthetically, I think our biggest evolution was to take the reflection data through motion,” says John Monos, CG supervisor. “We got pretty close to Doc Ock, but he wore sunglasses most of the time. On Superman we always see his full face.”

To help animators see how the changes they made affected the baked-in lighting, Imageworks’ system department developed methods to accelerate rendering. To replicate Routh’s expressions, the studio motion-captured his extreme poses using a method similar to that used for The Polar Express. “We applied data from the facial-capture session to his facial muscles,” says Andy Jones, animation supervisor. “We simplified the facial system, though, so that animators could control the expressions more easily and faster.” Similarly, animators could reference motion-capture data as well as green-screen footage for his body.

“What I found interesting,” Monos says, “was that when the director didn’t like the green-screen image, several times we were able to replace it with the digital double. This kind of digital human pipe is dependent on acquiring data from actors, but what better way to clone an actor than to take accurate measurements of how the skin reflects light?”

Imageworks flew their Superman through a digital Metropolis, alongside a supersonic jet, out of a flaming tunnel, and straight up into the air for two signature shots in which he soars to a point in space and hovers above the earth. In addition, the studio built the CG Daily Planet headquarters and a 12-block city section that they plopped down in Manhattan after removing distinguishing New York City landmarks such as the Chrysler building and the Statue of Liberty. For this, they used the pipeline developed to create a city for Spider-Man, and even remodeled some of those buildings.

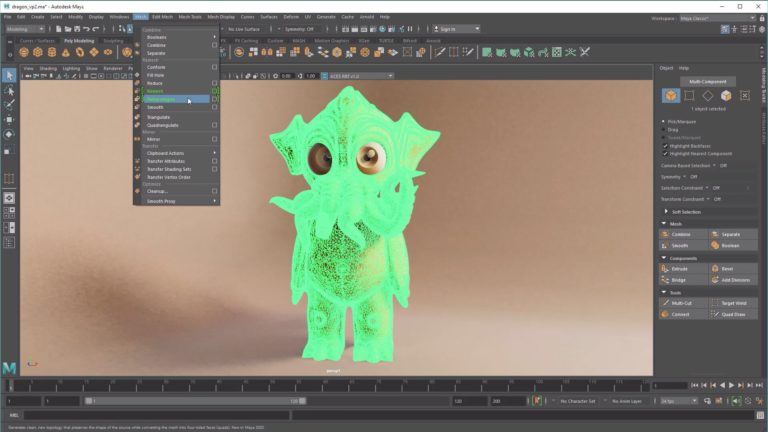

For the shuttle-destruction sequence, Imageworks built the 777, the shuttle, and the baseball stadium into which the airplane nosedives, and populated the stadium with digital people. Of all of this, however, Bruno Vilela, the CG supervisor who led the teams that created smoke, fire, the crowd simulation, and plane destruction, is proudest of the clouds that Superman flies through as he tries to stop the plane and in other shots. To create clouds, they started with models in Maya, then moved the geometry into Houdini, where it turned into thousands of selectively shaded points that further expanded into millions of points with a RenderMan DSO. “The ability to hand-model the clouds was the pinnacle of my strategy for the show,” Vilela says. “We used them at night, at high noon, and at sunset.”
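The point-expansion step in that cloud workflow can be illustrated with a toy example. The sketch below is not the RenderMan DSO Imageworks wrote, whose details the article does not give; it is a hypothetical Python stand-in in which each shaded seed point spawns a cluster of child points scattered inside a small sphere, each inheriting the seed’s shade. The function name, the uniform scatter, and the fixed radius are assumptions.

```python
import random

def expand_points(seeds, children_per_seed, radius, rng_seed=0):
    """Expand each seed point into a cluster of jittered child points.

    seeds: list of (x, y, z, shade) tuples, e.g. points sampled from
           hand-modeled cloud geometry.
    children_per_seed: how many child points each seed spawns.
    radius: maximum distance of a child from its seed.
    rng_seed: fixed seed so the expansion is repeatable between renders.
    """
    rng = random.Random(rng_seed)
    expanded = []
    for x, y, z, shade in seeds:
        for _ in range(children_per_seed):
            # Rejection-sample an offset uniformly inside the unit sphere.
            while True:
                ox = rng.uniform(-1.0, 1.0)
                oy = rng.uniform(-1.0, 1.0)
                oz = rng.uniform(-1.0, 1.0)
                if ox * ox + oy * oy + oz * oz <= 1.0:
                    break
            # Child keeps the seed's shade and sits within `radius` of it.
            expanded.append(
                (x + ox * radius, y + oy * radius, z + oz * radius, shade)
            )
    return expanded
```

Expanding at render time in this way keeps the hand-modeled scene light: artists work with thousands of points, and the renderer sees the full cloud.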

While those CG environments mimicked the real world, many of the CG environments created an otherworldly crystalline landscape.

Crystal Clear

“Frantic started quite early in the project developing a crystal pipeline, to grow the crystals in a way that would be useful by other vendors throughout the film and to keep consistency through the film,” says Stetson. “Both Rhythm and Framestore used it to various degrees.”

To grow the crystals, Frantic Films worked with the late Richard “Doctor” Bailey, who had developed a renderer called Spor for creating optical effects. “I wanted to use Spor as the basis for Kryptonite,” says Stetson. “Frantic worked with Doc Bailey to figure out how to render Spor’s 2D elements in 3D as well as contain them inside a crystal structure. Spor makes gossamer, abstract effects that in the old days would have been done with distorting lenses.” You can see the result in the birthing of the new Krypton landmass that Frantic created and in a specimen of Kryptonite that Lex Luthor holds.

Near the beginning of the film, Luthor invades Superman’s Fortress of Solitude, a crystal world grown in the Arctic. Kevin Spacey (Lex Luthor) and Parker Posey (Kitty) acted on a set. Rhythm & Hues matched the set digitally and extended it. “We used a bit of Doc Bailey’s Spor renderer that creates fractal images, for lack of a better word, for some areas, and built crystals that we rendered with WREN, our own renderer,” says Derek Spears, visual effects supervisor at Rhythm & Hues.

Marlon Brando, who played Superman’s father, Jor-El, in the first film, makes a holographic appearance during this sequence. “The technique was really an extension of what we do with talking animals,” says Spears. “We built a model, projected texture on it, and reanimated his mouth. I think it’s well done, but our water was much more of a breakthrough.”

The CG water became necessary for a sea rescue sequence during which Luthor destroys his yacht, the Gertrude, with Lois Lane and her son onboard. “Rhythm & Hues did an incredible job of building the digital version of the yacht and sinking it,” says Stetson. “The ship was built as a series of sets, but not as a complete exterior, and the sequence changed at the last minute, so we couldn’t use the miniatures for much of the destruction. R&H did the water, the big storm effects, and clever and comprehensive interactions between the objects and water.”

Jerry Tessendorf, chief scientist at Rhythm & Hues, well known for his research papers on water simulation, created the studio’s Iwave tool that generates the seascapes. To help direct the simulation, R&H also developed a field description language dubbed Felt. With Felt, they could scale the detail in splashes to add complexity as needed.

Spears explains that when virtual water flows through a box, for example, the direction it flows can be represented by vectors. A field is a collection of vectors – that is, a collection of directions. Thus, Felt can describe the way a splash moves as a set of directions. Rhythm & Hues could use waves as a source for Felt or manipulate field data to create waves. “We could take the point where the velocity crests as an impact point to generate a wave,” says Spears. “Felt describes how the interactions work. It’s a higher-level tool than the fluid simulation. A fluid simulator could be written in Felt. We could control the splashes at a high level and then render them with whatever detail we wanted so we weren’t bogged down with calculating details in the simulation.”
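Spears’ description of a field can be made concrete with a toy example. The sketch below is not Felt, whose syntax the article does not show; it is a hypothetical Python stand-in that stores one velocity vector per sample along a line and picks out the samples where speed crests, which could then serve as impact points for generating waves. The function names and the threshold parameter are assumptions.

```python
import math

def speed(vector):
    """Magnitude of a 2D velocity vector."""
    return math.hypot(vector[0], vector[1])

def crest_points(field, threshold=0.0):
    """Find samples where the flow speed crests.

    field: list of (vx, vy) velocity vectors, one per sample position.
    threshold: hypothetical minimum speed for a crest to count.
    Returns the indices whose speed is a strict local maximum above
    the threshold; each one is a candidate wave-impact point.
    """
    speeds = [speed(v) for v in field]
    crests = []
    for i in range(1, len(speeds) - 1):
        is_peak = speeds[i] > speeds[i - 1] and speeds[i] > speeds[i + 1]
        if is_peak and speeds[i] > threshold:
            crests.append(i)
    return crests
```

The point of working at this level is the one Spears makes: the crest positions steer where splashes happen, while the fine detail is left to whatever renders them.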

During the sea rescue, for example, a crystal impales the boat, creating large splashes. The studio simulated all the waves in Felt using the Iwave tools, and Houdini’s volumetric renderer, VMantra, rendered the water. Practical foam elements composited onto the water surface with the studio’s Icey compositing software added texture.

“I think this was technically more challenging than any water we’ve done before,” Spears says.

Killer Kryptonite

For the showdown between Superman and Lex Luthor on Luthor’s crystal island, Framestore CFC created 313 shots with CG environments and CG water that occupy around 20 minutes of the film. During the sequence, Superman falls off a cliff and is rescued by Lois in a seaplane. Later, Superman flies back, lifts the entire island out of the ocean, and tosses it into outer space.

“There was only one partial set for the area where they have their fight,” says Jon Thum, visual effects supervisor. “Everything else is digital. A seaplane lands in CG water, takes off in CG water, and flies over a CG waterfall.”

The fight scenes were shot against green screen when Superman was on the ground, but the sequence also includes stunt-quality digital Superman elements created by Framestore CFC. For close-ups, Framestore collaborated with Imageworks. “When we needed close-up or high-res Superman elements, Sony would render them,” says Stetson. “Generally, we wanted Sony to light Superman properly for the scene.”

The crystals from Frantic Films helped with close-up shots in which the animated effect could be seen inside the crystals, but for the most part the environments were part Kryptonite and part rock.

“We used a Lego building-block approach to build the island,” says Thum. “We made some crystal spikes [and] stuck them together into a nice composition, and if it worked we reused it in other shots.” For wide shots, the team used a large model. For other shots, they cut and pasted compositions of little spikes.

“We used Maya to block out shots and to do simple rigid-body stuff like the crystal rock, the helicopter and the seaplane,” Thum says. “[We used] Houdini for rendering the oceans and any kind of dynamic particle effects; RenderMan for rendering.” Rhythm & Hues supplied helicopter and plane models, while Framestore CFC built the island and the environment.

In one big sequence, as Luthor tries to escape the island, a huge column of rock falls down and smashes everything in its path. “We pushed Houdini dynamics as far as it would go,” says Thum. “Traditionally, when you have a simulation you press go and it just happens. But the frustrating thing is that you want to keep some, throw some away, and hold some bits before they move. Side Effects helped us push the choreography farther than it normally would go.”

Thum’s crew used Houdini, working with a Gerstner mathematical model for surface waves to create the water. Particles added spray and then procedural textures and practical elements added foam, even around rocks in the sea. “Mark [Stetson] shot a lot of foam elements by pointing a camera down on the water near Sydney,” Thum says. “And we developed ways to place the real foam onto specific areas of the 3D surface.”
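For readers unfamiliar with it, the Gerstner model moves each point of the water surface in a circle rather than simply up and down, which is what gives the waves their sharp crests and broad troughs. The sketch below shows the textbook form for waves traveling along one axis in deep water; it is not Framestore CFC’s Houdini setup, and the function name, the choice of a 2D cross-section, and the wave parameters are assumptions.

```python
import math

GRAVITY = 9.81  # m/s^2

def gerstner_point(x0, t, waves):
    """Displace one surface point with a sum of Gerstner waves.

    x0: rest position of the point along the direction of travel.
    t: time in seconds.
    waves: list of (amplitude, wavelength) pairs, all moving along +x.
    Returns (x, y): the displaced horizontal position and the height.
    """
    x, y = x0, 0.0
    for amplitude, wavelength in waves:
        k = 2.0 * math.pi / wavelength       # wavenumber
        omega = math.sqrt(GRAVITY * k)       # deep-water dispersion relation
        phase = k * x0 - omega * t
        # Horizontal and vertical offsets are 90 degrees out of phase,
        # so the point traces a circle of radius `amplitude`.
        x -= amplitude * math.sin(phase)
        y += amplitude * math.cos(phase)
    return x, y
```

Summing several waves of different wavelengths produces an irregular sea; keeping each amplitude small relative to its wavelength avoids the surface looping over itself at the crests.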

Houdini also helped Superman wrench the island out of the water. “We had enormous shots of Superman lifting up the island, with rocks falling into the sea and Kryptonite forcing its way through the rocks,” says Thum. “All that was done with setups in Houdini so we could break up rocks and push them out of the way and then mix that stuff with particle water and dust.”

In addition, a 2 ½ D matte-painting department added detail when the procedural textures weren’t sufficient by painting on the geometry. “We’d render a scene in Maya, take it into Photoshop, paint on the frame, re-project it back, and render it out again in Maya,” says Thum. “It enables you to have the best of both worlds. You can light stuff and paint the areas lacking detail. The only limitation is there aren’t many people in the world who are good at doing that kind of thing. We need people who are very good TDs and matte painters.”

Having Fun

One of The Orphanage visual effects supervisor Jonathan Rothbart’s favorite shots is not an obvious VFX shot. Superman has disappeared from his hospital bed – flown out the window. As the camera looks out the window and down the city street, the shot fades into Lois’s study. The Orphanage pieced together footage from five cameras to create the shot: a Steadicam following a woman through the room; a shot taken a year earlier with a Super Technocrane that moved down a city street in Sydney; a motion-control setup in the room that linked two cameras, one over the bed and another looking out the window; and a camera in Lois’s study.

“A lot of that is CG, but no one would know,” says Rothbart. They removed rigging and lighting from the room, created the window and the blinds, and recreated the city outside the window. Although they had a plate shot in Sydney, they changed it to match Metropolis and to blend into the shot of Lois’s study. Stories in the city buildings lined up with bookshelves on either side of the study, and a reflection matched a light over Lois’s head. “It’s a mellow and elegant shot with a lot going on that everyone assumes was photographed,” says Rothbart.

The Orphanage also added Metropolis touches to the Kitty car chase shot in Sydney. “We retooled the plate for some shots,” says Rothbart. “Others were completely digital. Some were projections on geometry, others were painted geometry.” They created the city in 3ds max, but for the bank job, used Houdini for effects such as sparks, bullets and tracers. Their digital stunt double of Superman was animated in Maya and rendered in SplutterFish Brazil and Mental Ray.

During a bank job sequence, a robber dares to pull a pistol on the man of steel. In a dramatic slow-motion shot, a bullet smashes into the center of Superman’s bright blue eye and the crunched slug falls to the ground leaving Superman, of course, unfazed. “It wasn’t a motion-control shot,” explains Rothbart. “It was shot on the Genesis. We had to recreate the scene using images from the plate and relight Superman based on the timing of the muzzle flash.” Although Routh was filmed with light flashes to imply the muzzle flash, once the shot was edited, the muzzle flash needed to happen a second and a half later.

To relight him digitally, the artists first removed the lights on his face, then re-painted him in Photoshop and projected the new painting in After Effects. “We replaced his eyes digitally so we could match not only the reflection in the eye, but also have the pupil and muscle twitch,” says Rothbart. “We had a projection map for every frame with a unique painting.”

To help Superman bounce bullets off his chest, the studio used matchimation to animate a digital Superman that had the same movement as the live-action actor. “Once they’re locked in step, we do our effects work and interactive lighting on a gray version of Superman.” Compositors used the intensity values to add colored lights, glows and effects to the final shot. “Because we’re using the costume and cape from the plate, we didn’t have to match reflective textures,” Rothbart says. “It’s a cheat, a sidestep so we don’t have to fully render him. It’s photoreal because we’re using the photoreal plate.”
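The compositing step Rothbart describes can be illustrated with a small sketch: a grayscale lighting pass rendered on the matched gray model supplies a per-pixel intensity, which is tinted with a chosen light color and added on top of the original plate. The function name, the additive blend, and the clamp to 1.0 are assumptions for illustration; the article does not specify the studio’s actual compositing math.

```python
def add_colored_light(plate, intensity, light_color):
    """Add tinted interactive light to plate pixels.

    plate: list of (r, g, b) pixels from the photographed plate, 0..1.
    intensity: list of grayscale values (0..1) from the lighting pass
               rendered on the gray stand-in model, one per pixel.
    light_color: (r, g, b) tint chosen by the compositor.
    Returns a new list of pixels with the colored light added.
    """
    if len(plate) != len(intensity):
        raise ValueError("plate and intensity pass must be the same size")

    result = []
    for pixel, amount in zip(plate, intensity):
        # Scale the tint by the rendered intensity, add it to the plate,
        # and clamp so the result stays in displayable range.
        lit = tuple(
            min(1.0, channel + amount * tint)
            for channel, tint in zip(pixel, light_color)
        )
        result.append(lit)
    return result
```

Because the costume’s color and texture come straight from the plate, the CG pass only has to say where the light falls and how strongly, which is the sidestep Rothbart refers to.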

That fits with Stetson’s goal.

“Everyone knows that these are not real events,” Stetson says. “But they’re still couched in real-world environments. We worked really hard to keep it all plausible. Superman in the past has flown faster than light, gone back and forward in time, and shifted universes around. It’s not wise to limit Superman’s strength or power, so we didn’t try to do that. But we did try to characterize it and keep it consistent. I hope people like what we did. We put our hearts into it.”
