Image Engine on Creating Believable CG Aliens for the Summer Sci-Fi Hit

One of the year's major success stories was the release of District 9, a science-fiction film set in South Africa that worked as an unconventional buddy film, a gritty action movie, and an imaginative parable of Apartheid and xenophobia. In the film, produced by Peter Jackson's WingNut Films, extraterrestrial visitors have been segregated to a section of Johannesburg known as "District 9", where they live in squalid conditions as their mysteriously disabled mothership hovers overhead. The story follows an officious bureaucrat, Wikus, who finds himself in an unlikely partnership with an alien named Christopher Johnson after Wikus inhales a mysterious substance that starts to transform him into an insect-like alien. If Wikus can help Christopher and his child, an alien pipsqueak known as Little CJ, get their mothership up and running, they can make him human again before they blast off for their home planet. All of this happens on a reported $30 million budget.

Vancouver's Image Engine grew to a crew size of 110 and completed 311 visual-effects shots for the film, with the responsibility of animating three main characters – aliens based on conceptual designs from Weta Workshop – as well as the larger numbers of aliens populating the film. So how did a largely unheralded Vancouver VFX facility land the job of creating amazing CG aliens for one of the coolest movies of the summer? He might not have known exactly how it would turn out, but that's the kind of project VFX Executive Producer Shawn Walsh had in mind when he started working at Image Engine in 2006. "There wasn't really a film presence at the company," he tells Film & Video. "My job was to create that."

Over the next couple of years, Walsh says, he helped build the company from a 20-person firm specializing in HD television work to a feature-film VFX house that could staff up to more than 100 people. High-profile projects included Mr. Magorium's Wonder Emporium, The Incredible Hulk, and this year's Orphan, and that kind of growth caught the attention of District 9's director, Neill Blomkamp, who had his own background as a Vancouver VFX artist working for The Embassy and Rainmaker Visual Effects. When Weta, swamped with work for James Cameron's upcoming Avatar, was unable to do the digital creature work for District 9, Blomkamp started to shop the project around.

"Ultimately I think it was a combination of our pitch to Neill after he contacted us, with a dialogue back and forth ' and some of the financing things I helped him with in a more pure exec-producer capacity ' that ended up landing the work in Vancouver," Walsh recalls. "And certainly Neill was a huge advocate of doing post and VFX in Vancouver." The company wasn't built using solely Vancouver talent, however ' Walsh estimates that a third of the staff is native to Vancouver.

The bidding process for District 9 ran from January to March 2008, with Image Engine winning the project in April, Walsh says. Asset-building began in May, and work began in earnest in September, as Image Engine pushed full-bore to make assets and tools before plates started arriving at the beginning of October, according to VFX Supervisor Dan Kaufman. Work continued through June 2009.

Image Engine had already made significant investments in its pipeline – including an R&D department tasked with developing custom tools and augmentations to existing tools – so the District 9 job required only "incremental" new expenditures, Walsh says. "Ingesting large amounts of motion-capture data was not something we had done before, but we had people with the experience who would be able to do that," he says. "We hadn't painted out an actor in a grey suit to the extent that we were going to be doing it on District 9, so we wrote custom tools to make that process easier."

That was Walsh's purview on District 9: establishing the parameters for the project in terms of how the facility would approach VFX problems, and marrying those issues to finances. Coming at the problems from a different angle was Kaufman, whose big-picture experience before he signed on for District 9 included such VFX-heavy movies as The Core and Poseidon as well as invisible-FX projects like Ocean's Thirteen and Leatherheads.

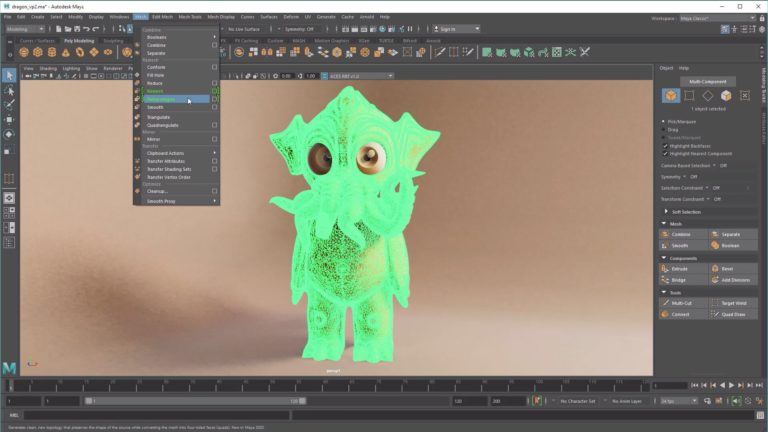

Arriving at Image Engine, the first task facing Kaufman was a survey of the landscape. "I had to see where everything was at, trying to get as much information as I could from all the different people working at Image Engine about where things stood," Kaufman says. "They had started work on an asset-management tool called Jabuka for actually allowing animators and artists to use different assets that we were building. Nigel Denton-Howes was the asset lead and developed that almost entirely himself. It was a very comprehensive tool that could bring in assets and use them in shots, with a GUI for picking different shots and attaching assets to other things. And there were other tools in the works when I got there to aid the animators in dealing with specific things and to add usability to Maya."

The aggressive approach to developing custom tools was important because there wasn't going to be much room for trial and error as the project was executed. "Honestly, the script was changing all the time. A lot of it was sort of ad libbed, and it was hard to tell what was going to be in the film and what wasn't," Kaufman explains. "So we knew the environment we were going to be in and the sort of things we'd have to do, and we had to plan to be able to handle whatever came at us. The overall complexity of shots grew quite a bit, so if we hadn't had that kind of pipeline, we would have been in a very bad place."

The toolkit for District 9 consisted of a number of common off-the-shelf programs, augmented with custom-built bits of software that extended their functionality. Autodesk Maya was the 3D workhorse, with The Foundry's Nuke doing compositing duty. Next Limit's RealFlow was called on for some fluids and dynamics simulations. Rendering was handled through the RenderMan-compatible 3Delight from DNA Research, which Kaufman says did "a fantastic job."

Motion-Capture, Rotomation, and Keyframing

Part of the early planning involved figuring out what mix of techniques would best address the variety of shots in the film involving aliens. "One of the things we assumed correctly early on," says Walsh, "was that we would have to use a liberal blend of motion-capture, rotomation, and keyframing to accomplish the animation, depending on what kind of shots the aliens existed in. Composition would be key to that decision."

Animation supervisor Steve Nichols breaks down the thought process further. "We used motion-capture where we could, especially for background characters," he explains. "If there was any interaction with the lead character, they had an actor on the set, and we would use rotomation. Jason Cope, one of the actors in the movie, became our Christopher. He would walk around in a very revealing skin-tight grey outfit with some tracking markers on it. Sometimes he was on stilts, as well, to get the eyeline right. He would mock up a general performance so we could get some nice interaction and contact with the characters. The animators would go in and capture that basic movement and make it more alien."

"And the third thing we had was straightahead keyframe animation, especially on Little CJ," Nichols continues. "We did shoot reference a lot, but a lot of the time we just keyframed the shots."

The production ended up going with all-CG aliens, rather than trying to execute a half-prosthetic hybrid. "They did have a costume for an actor to wear, but that didn't work out," Kaufman recalls. "Neill had a pretty clear idea in his head of what he was looking for – they should be humanoid with an insect influence. They should be brightly colored, which is tough because it looks real on an insect, but when you scale it up it's hard to make it look real again."

"The whole process evolved as Neill watched the takes coming in," Nichols says. "He started steering away from motion-capture because he wanted them to move differently ' to be twitchy and edgy, with razor-sharp movements that a human couldn't do. Because they're skeletal in their frame, they look light a lot of the time, so we had to do quite a bit of massaging to make sure they felt heavier and had some weight. A majority of the work on the movie was the rotomation overlaying the performance."

Once the animation techniques were established, there was the question of developing a style that would suit the film's mood and atmosphere, which was largely determined by the film's gritty handheld footage. "It took a while to get used to that – how much you push the animation to have it show up when the camera's moving around a lot, yet still feel realistic," Nichols says. "And I've never worked on a character who's had the crap beaten out of him so much. Having him devolve through the movie – limping more and becoming slower – and changing the model so that he has more cracks on him was very new."

Getting Emotion From an Alien

Getting the right performance out of all-CG alien characters was a special challenge, too. "It's an understated style of performance," Nichols notes. "They all have a tentacle moustache that rides on their mouth, so you rely on their eyes. They're like grasshopper eyes – how do you make someone feel sorry for a grasshopper? So a lot of that was Neill. Any time we did something that was hammy, he'd immediately slap our wrists and say, 'That's too Pixar-ish.'"

The film's emphasis on realism had implications for the creature design, too. "It's a matter of [the aliens] looking realistic in a shot that could be lit in the daylight or in dark interiors and exteriors. If the textures hold up and the specular holds up and it looks real in the environments, my job is done," says James Stewart, creature supervisor. "But there's no forgiveness there. It's not like they did a DI at the end of the process and made everything blue. They didn't hide anything. What we rendered is what you saw. So our tolerance level from look-dev had to be real, or it wouldn't be real in the shot. It was so revealing, and the sun was so hot, that we had to make every detail count."

And that relentlessly restless camerawork made tracking a formidable task. "The tracking was most definitely challenging, but quite manageable given the talent of our tracking team and the sophisticated software available," Kaufman says, noting that the team, led by Derek Stevenson, relied on Pixel Farm PFTrack, 2d3 Boujou, and Science-D-Visions 3DEqualizer. "Sometimes we had to take out the actor who was acting out the main alien parts. Tracking him out and tracking in the fixed-up background that didn't have him in it was the hardest part."

The 1,000,000-Alien Shot

One brief shot posed an extra challenge: the interior of the alien mothership, full of hundreds of the creatures, whose bodies can be seen stretching back into the darkness. It's seen near the beginning of the film, but it showed up at Image Engine very late in the production. "They originally called it the '100-alien shot,' but it gradually evolved. When Neill started talking about how many aliens he really wanted, it became the '1,000,000-alien shot'," says Nichols. "We were at a stage in the pipeline where we had built all of our assets and created everything we had to create, so this had to work within what we had already built.

"I had a really strong tech animator, Jeremy Mesana, who developed a system so that we could lay out hundreds of aliens in this room. We had to develop light enough rigs that they could take a bunch of different animations and populate out this huge space. We did that with 3D geometry for the foreground, and then everything else was [2D] cards in the background. The cool thing about that shot is it was the ultimate collaboration shot. My whole team of animators finished their other work, and everybody worked on that one shot together."

"That was hard mostly because we didn't have a lot of time to do it, so we had to scramble to figure out a way to get all the aliens together in that shot and make it work," says Kaufman. "Nuke has a nice integration of 3D and 2D together in one package, so we could sort of render passes on cards and stack them up in 3D space in Nuke.

"Neill sent me an email after the movie was over saying, 'Oh, by the way, I ran that shot through a VHS player. It works really well in the movie,'" Kaufman recalls. "I emailed him back saying, 'Well, whatever works – but I won't tell the compositor that!'"

In the end, Walsh says one of the most striking things about doing VFX for District 9 was the apparent ordinariness of most of the shots. "They're so banal, with a reality TV-era appeal, and you completely forget that there's an alien staring at you in that shot," he says. "This is a story about aliens invading Johannesburg! There are a lot of incongruities in the film that are really appealing. A lot of summer movies are quite derivative, and people still enjoy them even though they know what they're getting. District 9 was a real surprise to people going into the theater cold, who didn't know what to expect."

"It's always great working on a movie that you know is something different and original, and those opportunities don't come around often enough," Kaufman says. "Couple that with aliens, spaceships, and ray guns – awesome! And it's also really cool working with a director like Neill who has this whole alternate world in his head and can guide you through it. You're not just trying random things and hoping something sticks. It's all there – it's just a matter of getting that world onto the screen."
