Blades of Glory's 3D Face-Switching on a Budget

That wit and ingenuity were due, in part, to a lucky break. “Jon Heder cracked an ankle bone during practice, so we had a three-month hiatus while he healed,” says Breakspear. “That gave us an opportunity to previs every shot in the skating sequences, and we came up with new moves.”

To make the moves work, Rainmaker used new, state-of-the-art techniques to replace the four stunt skaters’ faces with the actors’ faces. The techniques rely on a blend of commercial software and proprietary tools. “People have done fantastic face replacement in CG on mega-movies, but we didn’t have four years or a blockbuster budget,” Breakspear says. At first, the studio pushed for a 2D solution, but Breakspear convinced the directors that switching faces in 2D would limit their ability to shoot the skaters and restrict the skaters’ movements.

Fortunately, Rainmaker had a gold-medal face-replacement expert on its team, CG supervisor Kody Sabourin, who had worked on the legendary “Super Punch” shot in The Matrix: Revolutions. In that shot, accomplished with proprietary technology, Agent Smith’s face deforms in an extreme close-up when Neo lands a punch.

“Given my experience on the Matrix films and from working at ESC with people like George Borshukov and Kim Libreri, I knew the best way to approach this project was with animated textures that we captured at the same time we captured the performance,” he says.

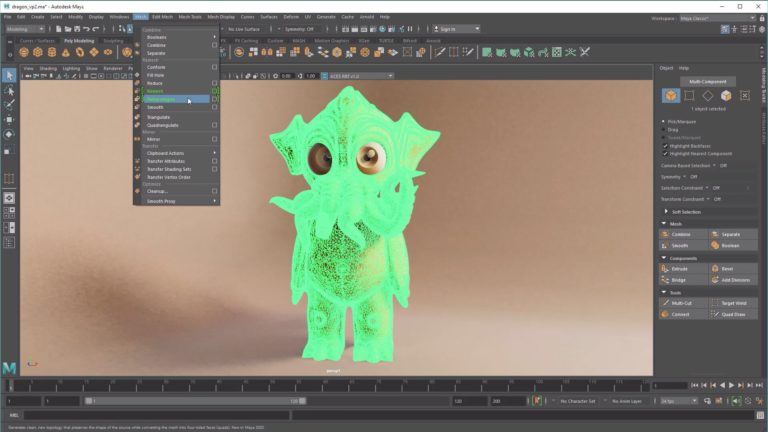

They began by taking digital photographs and making plaster casts of the actors’ faces. Then, they had the casts scanned in high resolution at XYZ RGB in Ottawa. Using the photographs and a 3D model created from the scan data in Autodesk’s Maya, visual effects artists determined where to place 102 tracking markers on each face. Before the performance capture session, makeup artists copied each marker’s position onto each actor’s face.

To capture the actors’ facial performance and animated textures simultaneously, Rainmaker devised a clever setup using three HD cameras, one film camera, and mirrors. “Imagine that the actor is sitting in a chair and around him are four cameras about two meters away,” says Breakspear. “Above and behind him are mirrors.”

The team captured the tracking markers with the three HD cameras, and recorded textures during the actors’ performances with the film camera, which they positioned in the center. Breakspear put lights behind silks to capture the faces with completely flat lighting. “Using the film camera was something that has never been done before,” Sabourin says. “Not even on the Matrix films. We could scan the film and have textures at 4K resolution if we needed, whereas with HD, we were capped at HD resolution.”

Each actor performed to footage of his or her stunt double. “We had split screens on set so the actors could watch the stunt doubles in the plate and then watch themselves reacting,” says Sabourin. “The director had free rein.”

The mirrors doubled the data collection and provided textures from multiple angles. “With mirrors, rather than using more cameras, we could split one image into three,” says Sabourin. “We got textures from the front and both sides of the face, and an extra three cameras tracking the performance.” To keep the frames matched once the cameras rolled, the team linked all the cameras to a time code generator.
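Because every camera is stamped from the same time code generator, matching frames across the HD and film cameras comes down to converting each stamp to an absolute frame count and keeping the counts that all cameras share. The sketch below is illustrative only: it assumes non-drop-frame SMPTE timecode (HH:MM:SS:FF) and a hypothetical 24 fps rate on every camera, neither of which the article specifies, and the function names are hypothetical.

```python
# Sketch: align frames from several cameras by shared SMPTE timecode.
# Assumptions (not from the article): non-drop-frame "HH:MM:SS:FF" stamps
# and the same frame rate on every camera.
FPS = 24  # hypothetical frame rate


def timecode_to_frame(tc: str, fps: int = FPS) -> int:
    """Convert a non-drop-frame timecode string to an absolute frame count."""
    hh, mm, ss, ff = (int(part) for part in tc.split(":"))
    if not 0 <= ff < fps:
        raise ValueError(f"frame field {ff} out of range for {fps} fps")
    return ((hh * 60 + mm) * 60 + ss) * fps + ff


def common_frames(streams: dict[str, dict[str, object]]) -> list[int]:
    """Return the absolute frame numbers present in every camera stream.

    `streams` maps a camera name to {timecode: frame_data}.
    """
    frame_sets = [
        {timecode_to_frame(tc) for tc in frames} for frames in streams.values()
    ]
    return sorted(set.intersection(*frame_sets))


# Usage: only the frame stamped 01:00:00:01 was recorded by both cameras.
streams = {
    "hd_left": {"01:00:00:00": "a", "01:00:00:01": "b"},
    "film_center": {"01:00:00:01": "c", "01:00:00:02": "d"},
}
print(common_frames(streams))  # [86401]
```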

The effects team tracked the markers using RealViz’s MatchMover Pro and triangulated the actors’ facial motion into 3D space. “We ended up with 102 dots that looked somewhat like the actors’ faces moving in 3D space,” Sabourin says. “That is, you could make out many of the moving shapes, like their ears, throat, lips, eyes, and nose.”
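Triangulating a marker means finding the 3D point that best agrees with the rays along which several calibrated views see it. The article does not describe how MatchMover Pro does this internally, so what follows is a generic least-squares sketch, assuming calibration has already produced each view's ray origin and the direction through the tracked 2D marker. `triangulate` is a hypothetical helper, not a MatchMover API; it minimizes the summed squared distance from the point to every ray.

```python
# Sketch: least-squares triangulation of one marker from several camera rays.
# Each ray is (origin, direction), assumed to come from camera calibration.
# Solves (sum_i P_i) x = sum_i P_i o_i, where P_i = I - d_i d_i^T projects
# onto the plane perpendicular to ray i.
Vec = tuple[float, float, float]


def _normalize(v: Vec) -> Vec:
    n = sum(c * c for c in v) ** 0.5
    return (v[0] / n, v[1] / n, v[2] / n)


def _det3(m: list[list[float]]) -> float:
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def triangulate(rays: list[tuple[Vec, Vec]]) -> Vec:
    a = [[0.0] * 3 for _ in range(3)]
    b = [0.0, 0.0, 0.0]
    for origin, direction in rays:
        d = _normalize(direction)
        for i in range(3):
            for j in range(3):
                p_ij = (1.0 if i == j else 0.0) - d[i] * d[j]
                a[i][j] += p_ij
                b[i] += p_ij * origin[j]
    det = _det3(a)
    if abs(det) < 1e-12:
        raise ValueError("rays are parallel; marker position is ambiguous")
    # Cramer's rule: replace column k with b to solve for coordinate k.
    result = []
    for k in range(3):
        mk = [row[:] for row in a]
        for i in range(3):
            mk[i][k] = b[i]
        result.append(_det3(mk) / det)
    return (result[0], result[1], result[2])


# Usage: three views whose rays all pass through the origin.
views = [
    ((-2.0, 0.0, 0.0), (1.0, 0.0, 0.0)),
    ((0.0, -2.0, 0.0), (0.0, 1.0, 0.0)),
    ((0.0, 0.0, 2.0), (0.0, 0.0, -1.0)),
]
print(triangulate(views))
```

With real tracking data the rays never meet exactly, which is why a least-squares solution is used rather than a single intersection.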

Next, they applied the animation from the dots to animatable meshes created from the high-resolution XYZ RGB scans of the actors’ facial casts. Proprietary code helped them transpose as much detail from each actor’s performance as possible. “That gave us an animated face mesh,” Sabourin says.

Weight maps helped make that movement believable. “We painted weight maps (grayscale texture maps) to define range of movement,” says Breakspear. The weight maps controlled how much influence each dot had over the geometry. “The skin on the nose doesn’t move far, so the weight map would be tight there,” explains Breakspear. “But a weight map for the cheek would be squashy.” Thus, if an anomalous dot tried to stray from reality, the weight maps pinned it in place.
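Rainmaker’s deformation code was proprietary, so this is only a hypothetical sketch of the idea the quote describes: each vertex follows a distance-weighted blend of nearby marker displacements, and a painted grayscale value per vertex (0 to 1) scales how far that vertex may move. Reading a “tight” map as a low value and a “squashy” one as a high value is an assumption, as are the Gaussian falloff, the `radius` parameter, and the `deform` name.

```python
import math

Vec = tuple[float, float, float]


def deform(
    rest_vertices: list[Vec],
    weight_map: list[float],  # hypothetical painted grayscale value per vertex, 0..1
    marker_rest: list[Vec],
    marker_now: list[Vec],
    radius: float = 1.0,      # hypothetical falloff radius
) -> list[Vec]:
    """Move each vertex by a falloff-weighted blend of marker displacements,
    scaled by its painted weight (0 = pinned, 1 = fully follows markers)."""
    displacements = [
        (n[0] - r[0], n[1] - r[1], n[2] - r[2])
        for r, n in zip(marker_rest, marker_now)
    ]
    out: list[Vec] = []
    for v, w in zip(rest_vertices, weight_map):
        w = min(max(w, 0.0), 1.0)  # clamp the painted value to [0, 1]
        total, acc = 0.0, [0.0, 0.0, 0.0]
        for m, d in zip(marker_rest, displacements):
            dist2 = sum((v[i] - m[i]) ** 2 for i in range(3))
            f = math.exp(-dist2 / (radius * radius))  # Gaussian falloff
            total += f
            for i in range(3):
                acc[i] += f * d[i]
        if total > 0.0:
            acc = [c / total for c in acc]  # normalized blend of displacements
        out.append((v[0] + w * acc[0], v[1] + w * acc[1], v[2] + w * acc[2]))
    return out
```

A vertex painted 0 stays where it is no matter what a stray marker does, which is one simple way to get the pinning behavior the quote describes.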

The result gave them fully CG versions of the actors’ faces performing as they did on set. “It was a breakthrough,” says Breakspear of the team’s solution. “A eureka moment.”

Then, especially for close-up shots, they extracted high-resolution displacement maps from the XYZ RGB scans to add such details as scars and pores. “That, combined with the animated texture maps, gave us wrinkles,” says Sabourin. “Because we had 150 face replacements with unique performances to do, we gave the faces that were far away only the bare minimum, but a lot of the shots were close-ups.”

To match the lighting on the digital face to lights used when filming the skating doubles, Breakspear relied on HDRI. “For every shot of the doubles, we had shots of a gray ball, a chrome ball, and images taken with a fisheye camera,” he says. Even so, the lighting team faced unique challenges. “The ice created a big bouncing light environment. Our lighting team did a fantastic job making the digital faces look right.” For rendering, Rainmaker used Mental Ray.
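A chrome ball is useful for HDRI because each point on its surface reflects a different direction of the surrounding environment toward the camera; unwrapping the ball image into a light probe means computing that direction for every pixel. The article does not say how Rainmaker processed its ball images, so this is a standard mirror-ball sketch, assuming a distant (orthographic) camera looking down -z and pixel coordinates normalized so the ball’s silhouette is the unit circle. `mirror_ball_direction` is a hypothetical name.

```python
import math


def mirror_ball_direction(u: float, v: float) -> tuple[float, float, float]:
    """Map a chrome-ball pixel to the world direction it reflects.

    (u, v) are image coordinates normalized so the ball's silhouette is the
    unit circle, with +u right and +v up. Assumes an orthographic (distant)
    camera looking down -z at the ball, so the view ray is (0, 0, -1).
    """
    r2 = u * u + v * v
    if r2 > 1.0:
        raise ValueError("pixel lies outside the ball")
    nz = math.sqrt(1.0 - r2)
    # Surface normal at the hit point on a unit sphere.
    n = (u, v, nz)
    # Reflect the view ray d about n: r = d - 2 (d . n) n, with d . n = -nz.
    return (2.0 * nz * n[0], 2.0 * nz * n[1], 2.0 * nz * n[2] - 1.0)
```

The ball’s center reflects the direction back toward the camera, and its rim reflects the direction directly behind the ball, which is why a single chrome-ball photo covers nearly the whole sphere of incoming light.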

“Creating full CG stadium replacements with CG crowds was roughly half our work,” says Breakspear. Although the studio had devised a method to create crowds in 2D for the film She’s the Man …

During the live-action shoot, 800 people and 5000 inflatable stuffies sat in the stands. “The stuffies worked well for a blurring background,” says Breakspear. “They look like real people. But in some shots we needed to have the people moving and clapping.”

For those shots, Rainmaker either replaced everything in the L.A. arena from the second level of seats up or created fully 3D stadiums by building digital sets in Maya. For the crowds, the studio used Massive software, building brains that signaled the fans to clap, cheer, stand up, sit down, walk through the aisles, and even order hot dogs. The CG characters moved using animation cycles created from motion data captured at the studio’s new division, Mainframe Entertainment, which Rainmaker acquired in 2006.

Creating the Iron Lotus moves for the film involved face replacements, rig removals, CG crowds, CG stadiums, and green-screen composites. “Everything came together,” says Breakspear. “It was so dramatic. Jon Heder grabs Will’s leg and throws him in the air. Will spins in the air backwards. Then, Jon puts one leg in the air, does his own spin and jumps into the air … and … it’s so dramatic.”

That people walk out of the theater laughing, not wondering how the actors could possibly have performed their skating moves, is a tribute to the VFX moves performed at Rainmaker. “Without our new face-replacement technology, we couldn’t have done it,” says Breakspear.
