A CG character is a tall order for television, no matter what the context is. Sure, Game of Thrones has a budget for dragons and White Walkers. Still, it’s safe to say that 3D animation was one of the last things Starz had on its mind when it began airing Survivor’s Remorse, the LeBron James-produced series about pro basketball player Cam Calloway (Jessie T. Usher), back in 2014.

But there it was, in last season’s penultimate episode, “Second Thoughts” — a little CG fetus engaging Cam (who has inadvertently ingested a bit of LSD) in a psychologically fraught conversation about a high-school girlfriend’s abortion and what might have become of his unborn son if a different choice had been made.

Not your typical TV fare, even on premium cable! But it turns out the idea had been percolating for a while in the head of showrunner Mike O’Malley. Because he had been imagining it for so long, O’Malley would need to guide the CG performance, with the ability to revise the animation over multiple takes up until the moment the episode was finally locked. Where 3D animation is concerned, that’s a recipe for spending a lot of money. It fell to visual effects supervisor Rick Redick to make it happen affordably.

“Doing 3D animation is hugely labor-intensive,” Redick says. “The short version is, we tried a lot of stuff.”

A Long-Gestating Idea

Redick had worked with O’Malley on Shameless before they reunited on Survivor’s Remorse, so they already had a good working relationship. And O’Malley had faith that Redick could make the scene happen.

“He was a writer-producer on Shameless and he called me up one day and said, ‘Could you animate a fetus?’” Redick recalls. That scene never made it into a Shameless script. But three seasons into his own show, O’Malley returned to the idea.

(As executed, the scene isn’t as unnerving as you might imagine. In an interview with The Hollywood Reporter, O’Malley explained that the fetus was named Figgy “because it’s a figment of what Cam thinks a 20-week-old fetus would look like, not what a 20-week-old fetus would look like.”)

When the scene finally showed up in draft form, Redick remembers starting to panic. It wasn’t written as an animated fetus delivering a couple of lines to camera. It was drawn out to a full-blown monologue that would chew up four or five minutes of screen time. Redick knew O’Malley would want to dictate precise movements and gestures to help make the scene work, and that the process of making iterative changes to the 3D work could derail it entirely. Redick’s first thought: Let’s get this in camera.

“I originally thought we would shoot a practical effect,” he says. “We went to the place that makes all the dummies for The Walking Dead and paid them $1,000 to rent this little baby doll for a week. We hired a puppeteer and we shot that little dude, thinking this puppet was going to work as our baby.”

It didn’t fly.

“They really wanted a 3D thing,” Redick says. “Mike had just seen The Jungle Book, so he said, ‘I want it to look like The Jungle Book!’ Not having a ton of money, needing it to look good, and needing it to be totally tweakable — it seemed impossible with the resources we had.”

Redick had cleared out his own box of tricks, so he started asking around to see if anyone else in town had any ideas. That’s when a mutual colleague referred him to Mobacap founder and CEO James Martin, a previs and motion-capture specialist who was doing interesting work in TV, film and videogames, with jobs in the medical and educational markets on the side. To Redick’s astonishment and relief, Martin listened to the pitch and responded, “Yeah, man, I can totally do this.”

Moving to Real-Time Animation Software

The backbone of Martin’s technique was Reallusion’s iClone real-time 3D animation software. The existence of iClone wasn’t exactly a secret in the industry, but it wasn’t considered a capable tool for broadcast content. “The way we used it for this particular project, as far as I know, had not been done for any broadcast television up to this point,” Martin says.

The key to success was being able to support multiple iterations, allowing O’Malley to refine the scene as the show got closer to completion. In order to support both the complexity of facial animation and the need to precisely recreate a gestural performance, the job was broken into two parts — the head and the body.

“We had the head built in a traditional 3D-animation sort of way, and the foundation of the body was [created by] James,” Redick explains. “I shot Mike [O’Malley] doing all the gestures, then sent the footage to James, who watched it and mimicked it with his mocap rig on. That was the way we needed it to happen.”

“The gestures were important,” says Martin. “We’re talking about things as intricate as flipping the bird. We needed to be very expressive in the body language. Mike has this attitude in the way he performs, and the fetus was supposed to have that same sort of demeanor. Getting that in the facial performance is one half of the battle, but getting it from the neck down is another monster.”

Reallusion Character Creator was used to lock down the character concept and design for the rig. To generate the actual performance data, Martin used a Perception Neuron inertia-based motion-capture system from Noitom, which he says has a technology partnership with Reallusion allowing mocap data to stream into a character rig in iClone in real time. “The speed of real-time animation plus inertia-based mocap rigs really allowed me to say yes to a two-week deadline,” Martin says. “I literally slept beside my machine for a few days with a Flame editor and we babysat renders to get it through. But we hit deadline, and we had a pretty streamlined process.”

Last-Minute Changes

Was Redick able to keep O’Malley’s ideas for revision in check, given the complexity of the scene? Not a chance. “I went over to the production office [near the end of the process] and Mike had all these new ideas,” Redick remembers. “I almost started crying. ‘Dude, we’re done!’ But instead, I set up a camera in a conference room and we had him go through the whole scene again, and James redid the whole thing, altering the animation to accommodate those last-minute changes.

“[But] we had to hand-animate the face and the mouth in a traditional 3D world, which is such a tedious, labor-intensive process. The real-time mocap is insanely great, and I’m sure there will be a day when the software catches up to James and you could use it for the head and face, too.”

As a power user of Reallusion software, Martin knows a thing or two about what’s in development, and he says a new partnership with facial-capture specialist Faceware Technologies is in the cards for version 7, along with improved lookdev tools including physically based rendering and global illumination as well as the ability to import and export cameras.

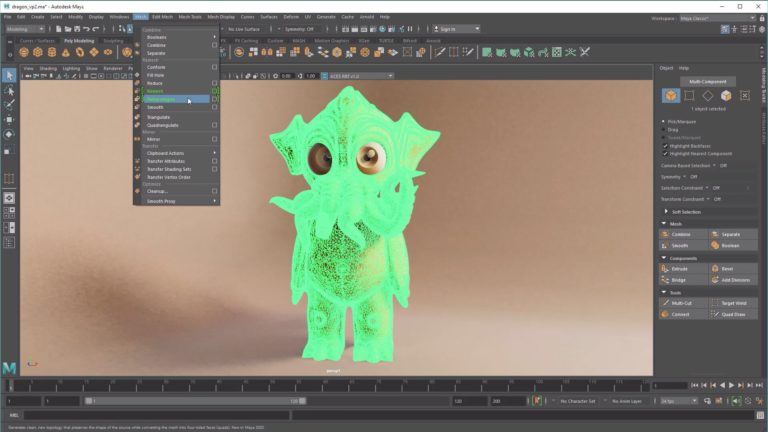

“They’re an extremely innovative company that has been able to find a space in traditional pipelines by working with traditional 3D teams and studios without disrupting their workflow,” Martin says. “They’re providing output, whether that is in a 2D or 3D format, that is compatible with standard, industry-level tools. If somebody is working in Avid Media Composer or Autodesk Maya, you can provide them with a Targa sequence or a full 3D model and scene through Alembic. It’s a really versatile 3D production engine.”

Redick does caution that the output needs special attention to pass muster in broadcast-quality footage — a small price to pay for a 3D animation workflow that runs at life-saving speed. “There are limitations in terms of textures and surfaces,” he says. “You get the foundation of the animation, and then it needs some love on top of that foundation in order for it to look great on a 30-foot screen. But it’s a perfect blend of high-tech and lo-fi. It’s down and dirty enough that you can turn things around overnight, and then it takes a little love to make it look great.”
