How Image Engine's VFX Process Kept Up with Tarsem's Visual Imagination

The film’s VFX supervisor, Raymond Gieringer, coordinated the work of a raft of facilities in North America, Europe, and India: Image Engine, Prime Focus Film VFX, Mikros Image, Tippett Studios, Scanline VFX, Rodeo FX, BarXSeven, Fake Digital Entertainment, Modus FX, Christov Effects and Design, and Technicolor, along with previs houses The Third Floor and NeoReel. Vancouver’s Image Engine was tasked with creating the vast cliff-face environment linking three of the film’s major settings.

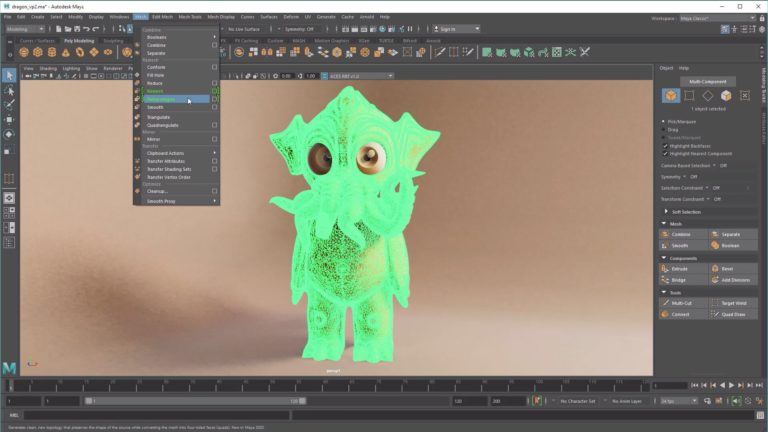

The virtual environment had to be detailed in all the right places to stand up to camera scrutiny in shots where it filled most of the frame, simple enough overall to allow quick fixes during production, and massive enough to make sense as the camera pulls back to a literal god’s-eye view of earthly affairs. The CG build was done in Autodesk Maya and Pixologic Zbrush; matte paintings were executed mainly in Photoshop, with a little Maxon BodyPaint 3D in the mix; and compositing happened in Nuke. Simon Hughes, Image Engine’s VFX supervisor on the show, drew on experience he had gained working on Inkheart at Rainmaker in London to manage the task. “I had a good taste for how long a process this can turn out to be,” he says. Hughes filled us in on the process for Immortals, Image Engine’s largest environment build to date.

SD: What were the first steps in the process?

SH: First, I went on set with Raymond [Gieringer, overall VFX Supervisor] to partially supervise the work. The three sets were the checkpoint, the tree bluff, and the village, all shot on green screen in a studio in Montreal. The production had a Mo-Sys system, so they did a basic build of the cliff as a Maya scene with very simplified geometry, just to help us visualize it. They created a digital camera – a direct mimic of the actual camera – and pulled a quick key of the green screen using that simple model so that Tarsem and Raymond and I were able to see the overall layout. So while they were shooting, we were visualizing what it might look like, and we had those scene files to start with.
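Hughes doesn’t say which keyer the on-set system used. As a rough illustration of what pulling a quick key over a CG stand-in involves, here is a minimal color-difference key in Python. It assumes linear RGB floats in [0, 1], and the `gain` parameter and function names are hypothetical; production keyers handle spill, edges, and noise far more carefully.

```python
def color_difference_key(rgb: tuple[float, float, float], gain: float = 2.0) -> float:
    """Return alpha for one pixel: 1.0 keeps the foreground, 0.0 is pure green screen."""
    r, g, b = rgb
    # How much green exceeds the stronger of red and blue.
    green_excess = g - max(r, b)
    alpha = 1.0 - gain * green_excess
    return min(1.0, max(0.0, alpha))


def composite_over(fg, bg, alpha):
    """Standard 'over': blend the keyed foreground onto a background such as a CG render."""
    return tuple(alpha * f + (1.0 - alpha) * c for f, c in zip(fg, bg))


# Example: a green-screen pixel is replaced by the CG cliff pixel behind it.
cliff_pixel = (0.4, 0.35, 0.3)
screen_pixel = (0.1, 0.9, 0.1)
alpha = color_difference_key(screen_pixel)
print(alpha, composite_over(screen_pixel, cliff_pixel, alpha))
```

Neutral colors such as skin or costume keep full alpha because their green channel doesn’t dominate, while saturated green drops to zero and lets the simple cliff model show through.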

In addition, the three sets were all LIDAR-scanned. That gave us a massive, very heavy Maya scene as a point cloud. We took those three sets in and stripped them down to a minimum so we could build and project onto them using the basic cliff model as the underlying structure. We made a new, higher-res but undetailed version and projected geometric shapes onto it as reference material. Next, we took the cliff and the stripped-down LIDAR sets and fit all the pieces together, putting the checkpoint, the tree bluff, and the village into the model. That gave us a solid foundation for our build, and it also allowed us to do quick renders as we started to get plates from production.
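Hughes doesn’t say how the LIDAR scans were stripped down. One common technique for thinning a dense point cloud before using it as layout reference is voxel-grid downsampling, which keeps a single averaged point per cube of space. Here is a minimal Python sketch, assuming the scan is a list of (x, y, z) tuples and an arbitrary voxel size. It illustrates the technique and is not Image Engine’s pipeline.

```python
from collections import defaultdict
from typing import Iterable

Point = tuple[float, float, float]


def voxel_downsample(points: Iterable[Point], voxel_size: float) -> list[Point]:
    """Replace all points that fall in the same cubic voxel with their centroid."""
    if voxel_size <= 0:
        raise ValueError("voxel_size must be positive")
    buckets: dict[tuple[int, int, int], list[Point]] = defaultdict(list)
    for x, y, z in points:
        # Floor division assigns each point to an integer voxel index (works for negatives too).
        key = (int(x // voxel_size), int(y // voxel_size), int(z // voxel_size))
        buckets[key].append((x, y, z))
    result: list[Point] = []
    for pts in buckets.values():
        n = len(pts)
        result.append((
            sum(p[0] for p in pts) / n,
            sum(p[1] for p in pts) / n,
            sum(p[2] for p in pts) / n,
        ))
    return result


dense_scan = [(0.1, 0.1, 0.1), (0.2, 0.2, 0.2), (1.5, 0.0, 0.0)]
print(voxel_downsample(dense_scan, 1.0))
```

Larger voxels give a lighter scene but lose fine detail. The right size depends on how the stripped-down set will be used.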

SD: Were you just adding environments, or were you doing some touch-up and adding elements to the live-action scenes, as well?

SH: The biggest problem is the nature of the rock surfaces themselves. In a couple of these shots, a third of the frame might be a rock surface. As you get farther into it, things get moved around and you try out different surfaces until you get a very good build. But to get away from the feeling that your foreground was shot in a studio, you need to augment what was in the set. So we didn’t really do builds, but we augmented with matte painting and projecting [in the live-action sets] to marry them into what we were building for the environment.

SD: How did your workflow progress during production?

SH: We didn’t have complete visibility on what our footage was going to be from day one. To cover our backs, we took the CG build really far. We brought the design and shapes into Zbrush, where Gustavo Yamin, our main CG artist for the cliffs, meticulously sculpted rock-surface detail into the cliff. He would start off with rigid shapes, blocks and cubes, and break that into manageable chunks and carve in the detail. We focused that work around the main set. That way, if we had to do something drastic with the camera, like flying across the sets or moving in really close, we would have a believable rock surface instead of just a projection. As we went through the show, we had matte painters working in unison, doing broad strokes and painting up what the overall look could be.

There was a crossover point where we said that’s enough for Zbrush sculpting and focused on matte painting. It’s hard to nail the look from day one. Between us and the other VFX facilities and Raymond, we were all trying to work out what Tarsem wanted. A lot of that was happening late in the day, so it became more about matte painting, which allowed us to move fast when Tarsem said, “Let’s change this area.” You needed to move away from sculpting by that point, because that’s too meticulous and too long a process.

SD: So you had to pick and choose where all that visible detail was necessary as opposed to speed and flexibility.

SH: Yes. The village itself was built out of 3D assets, but there was a cut-off. We extended it up to 25 stories. We blocked it out with basic geometry – cubes and geometrical shapes that extended directly up from the original village. We took bars, windows, curtains, and lanterns from the photographed village and created a whole collection of set dressing that could be used in a general way. And then we started putting in windows, moving elements around, and just getting a feel for it. We followed the brief, but as we got further into the show and pulled the camera back, it was quite hard to read that there were 25 stories in all that detail. So we did a cut-off for the CG build. Based on where the camera would be, we decided we only needed to build up to the 7th or 8th floor at a higher resolution. After that point we did matte paintings again. We carved in different pathways, shifted buildings around quickly and easily, and changed rooftops. A lot of creative decisions were being made to help the story, so we had to remain open to creative changes. It wasn’t bad, by any means. We just had to adjust quickly.
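The interview gives no formula for the cut-off beyond “based on where the camera would be.” One simple way to reason about such a decision is a pinhole-camera estimate of how many pixels each floor would occupy on screen. Here is a hypothetical Python sketch. The function name, the floor height, focal length in pixels, and pixel threshold are all assumptions, and it ignores foreshortening from viewing angle.

```python
import math


def floors_needing_cg(camera_pos, building_base, num_floors, floor_height,
                      focal_px, min_pixels):
    """Return 1-based floor numbers whose on-screen height reaches min_pixels.

    Pinhole approximation: projected size ~= focal_px * size / distance.
    Floors below the threshold are candidates for matte painting instead.
    """
    cg_floors = []
    for floor in range(num_floors):
        # Centre of this floor, stacked straight up (+Y) from the building base.
        centre = (building_base[0],
                  building_base[1] + (floor + 0.5) * floor_height,
                  building_base[2])
        distance = math.dist(camera_pos, centre)
        if distance == 0:
            cg_floors.append(floor + 1)  # camera inside the floor: needs full detail
            continue
        projected_px = focal_px * floor_height / distance
        if projected_px >= min_pixels:
            cg_floors.append(floor + 1)
    return cg_floors


# Hypothetical numbers: 25 floors of 3 m, camera 100 m away at street level.
print(floors_needing_cg((0.0, 0.0, -100.0), (0.0, 0.0, 0.0), 25, 3.0, 2000.0, 55.0))
```

With these made-up numbers only the lower floors clear the threshold. The actual floor where the cut-off lands depends entirely on the assumed camera and threshold, so this illustrates the reasoning rather than reproducing the 7th-or-8th-floor decision.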

SD: You created the arrows for the Epirus Bow recovered by Theseus. How was that accomplished?

SH: We built a CG arrow that went through various iterations, with a lot of back-and-forth between us and Raymond. At one point, we had a giant spinning mechanical head on it. We called it the Iron Maiden version – it was much more rock and roll. But the underlying arrow is a solid form, a metallic silver structure with a feathered tip and a chrome-like head. We sculpted a lot of nicks and detailing into the actual body of the arrow. When we rendered the arrow in the scene using 3Delight, that gave us a large selection of AOVs for the compositor to play with. We did previs it and tried out different, much more fluid, treatments – things that looked like fire as we pulled back, or different extremes – but we went back to the original bow, which looked quite different from what’s in the film. It was a silver bow that had little diamonds on it. We wanted to have an arrow that starts to reveal with a vapor, and then starts to glisten. The longer you hold the arrow, the stronger its power gets.

Using AOVs, and having that detailing carved into the arrow itself, the compositor could fine-tune the effect and get small, sharp, crisp details in the arrow. He could isolate the detailing, applying lens treatments like chromatic aberration and glows. The depth passes allowed us to control the brightness from the tail of the arrow to the head, and to animate that. We had different controls on the glistening effect we created from the specular pass. Finally, we were able to apply overall lensing and track it to environments using a pref pass, which actually gives you numerical values for where you are in a 3D environment.
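Hughes describes using depth passes to control and animate brightness from the arrow’s tail to its head. The article doesn’t show the actual Nuke setup. The Python sketch below illustrates the idea on plain nested lists, assuming a single-channel beauty pass, a depth AOV holding camera distance per pixel, and known tail and head depths. The function names and the linear falloff are illustrative choices, not Image Engine’s.

```python
def depth_ramp_gain(depth, tail_depth, head_depth, front, falloff=0.25):
    """Per-pixel gain in [0, 1] driven by a depth AOV.

    Depth is mapped to u = 0 at the arrow's tail and u = 1 at its head.
    Pixels with u <= front get full gain; gain fades to 0 over `falloff`.
    Animating `front` from 0 to 1 sweeps the glow from tail to head.
    """
    if head_depth == tail_depth:
        raise ValueError("tail and head depths must differ")
    if falloff <= 0:
        raise ValueError("falloff must be positive")
    gains = []
    for row in depth:
        out_row = []
        for d in row:
            u = min(1.0, max(0.0, (d - tail_depth) / (head_depth - tail_depth)))
            out_row.append(min(1.0, max(0.0, 1.0 - (u - front) / falloff)))
        gains.append(out_row)
    return gains


def apply_glow(beauty, gains, intensity):
    """Brighten a single-channel beauty pass: out = beauty * (1 + intensity * gain)."""
    return [[b * (1.0 + intensity * g) for b, g in zip(b_row, g_row)]
            for b_row, g_row in zip(beauty, gains)]


depth_pass = [[10.0, 12.5, 15.0, 17.5, 20.0]]
gains = depth_ramp_gain(depth_pass, tail_depth=10.0, head_depth=20.0, front=0.5)
print(apply_glow([[1.0] * 5], gains, intensity=2.0))
```

Keying `front` over time is what turns a static ramp into the animated build-up of power along the arrow. The same gain could just as well drive a glow or a specular-based glisten instead of a plain multiply.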

SD: What was the most challenging shot in the film?

SH: Really, it was two shots, and they are the most obvious ones. One is where we fly through the sets, following the four arrows that Theseus lets fly toward four soldiers. And then the other one is where we fly back through the sky and end up in Athena’s eye at the end. Those two shots show off the environment in full. They were where everything we had been building got used.

For the pullout shot, we had to go further still. You don’t usually see above the cliff face, except matte paintings of mountains and the horizon. We had to create mountains, make little islands and rocks out in the ocean, and extend the ocean much farther than in previous shots. About 40 percent of the clouds in that shot were CG and the remainder were projected matte paintings. Originally we were going to go with a full CG cloud, but as we moved forward we realized more of a painterly approach was needed, and we again chose a cut-off point where a matte-painted approach worked versus a CG cloud. As we flew through the cloud, obviously, it needed to be CG. The final bit of that pullout shot is as you go through the eye of Athena. We had to create a digital version of her irises, with all the high-res detail you’d see, and create a chromatic and fluid appearance. As you go through the eye, it becomes more of a reflection of what she’s looking at down on the earth. We had to ask, how would this physically work? Are we seeing a reflection or is it a dissolve? It really becomes a happy marriage between dissolve and reflection.

The full environment was done without Zbrush detailing. We designed the terrain in Maya, creating a series of mountains that could be moved around easily, and gave that to the matte painter to paint on top of. That was set up as a projection, with matte-painted clouds way back to the horizon and a matte-painted sky.
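Hughes says the distant mountains, clouds, and sky were set up as a projection, with paint sent through a camera onto simple geometry. At the heart of camera projection is a pinhole projection that turns each surface point into a coordinate in the painting. Here is a minimal Python sketch, assuming an unrotated projector camera looking down -Z, focal length and film back in millimetres, and a hypothetical function name. A production setup would use the projector camera’s full transform.

```python
def projector_uv(point, projector_pos, focal_length, film_width, film_height):
    """Map a world-space point to (u, v) in a matte painting projected from a camera.

    Simplifying assumption: the projector looks down -Z with no rotation.
    Returns None for points behind the projector.
    """
    x = point[0] - projector_pos[0]
    y = point[1] - projector_pos[1]
    z = point[2] - projector_pos[2]
    if z >= 0:
        return None  # behind (or level with) the projector
    depth = -z
    # Perspective divide onto the film back, then normalize to 0..1 across the painting.
    film_x = focal_length * x / depth
    film_y = focal_length * y / depth
    u = film_x / film_width + 0.5
    v = film_y / film_height + 0.5
    return (u, v)


# A mountain vertex 35 m in front of a 35 mm projector with a 36 x 24 mm film back.
print(projector_uv((10.0, 0.0, -35.0), (0.0, 0.0, 0.0), 35.0, 36.0, 24.0))
```

Points whose (u, v) falls outside 0 to 1 lie beyond the edges of the painting. Because the lookup depends only on geometry and the projector camera, mountains can be moved around in Maya and simply pick up whatever part of the painting now lands on them.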

SD: And what about the arrow shot?

SH: That was a very dynamic camera move. There was a lot of back and forth between us about how we should fly into the set, how close we should get to the arrows, and whether they should fly below us or above us. Another strange factor was that we catch up with all four arrows at the same time. We had to find a way to make that more believable – the longer he holds them in the bow, the faster they come flying through the air. We created trails and tried to create air pockets to make the arrows look like they were disturbing the air around them. At the end of the day, things got simplified, but a shot like that takes a lot of time to work out. For those two shots, we needed to push everything, from the build to the matte paintings, farther than for all the previous shots, either because we saw more or got closer to it.

SD: Did the fact that the release was stereo 3D affect your work?

SH: Yes and no. There was a question at the start of the show about how we should manage that. We went in with our eyes open to the fact that it was going to be a stereo show. If we got a last-minute request to do everything in stereo, we would have been able to manage that request. A large amount of the show was filmed in stereo and a large amount wasn’t, so there was a conversion process applied and we had to keep in mind that these shots needed to be broken up into as many individual layers as we could supply. But our work was finaled and approved as a mono image. We did that knowing full well we could supply the individual layers to the conversion facility.

SD: Do you think the stereo effect could be better if you were delivering actual stereoscopic assets?

SH: It’s arguable. In the shots with big camera moves we did give them, effectively, 3D scenes. We gave them the cameras, so they could have managed it the same way. We might have come up with a slightly better result in some shots, but a lot of the camera moves don’t push the stereo effects to the limit.

SD: What was your collaboration with the director like?

SH: Our overall contact with Tarsem was very sparse. Raymond was our main point of contact, but we had quite a few reviews with him in the initial stages as well as in the later stages. He will give you broad strokes, but we found we were working with quite an open brief at the start. Creatively, we were having to pull it out of the bag. We’d ask, what has he done in previous movies? What are the overall consistencies, stylistically, that we think he would like? One big consistency we saw was how minimal things could be, how labored camera moves could be, and how long the shots could be. You really are creating paintings. It’s nice to be left to your own devices and try to come up with the goods – to get into the mindset of the show.

We were trying to speak to Raymond and find out what the other [VFX] facilities were doing. We had some crossover with Scanline VFX, only because we were adding arrows into their environments. We did some [work] with Rodeo FX, as well. But there wasn’t a lot of contact between us until late in the game, and the cool thing that seemed to happen is we were all going in the same direction – which was a huge relief, obviously. It was a good sign of how much Raymond was steering us all together. It was a time-consuming process, but it worked.

Did you enjoy this article? Sign up to receive the StudioDaily Fix eletter containing the latest stories, including news, videos, interviews, reviews and more.
