How Weta Digital Bulked Up for King Kong

Award, and an Oscar nomination in the past three years at Weta Digital.

Now, he’s doing something really big: Peter Jackson’s King Kong. We got him on the phone, a month from his deadline on Kong at Weta’s Wellington, New Zealand facilities, to talk about the new technology and techniques Weta devised to meet Kong’s many challenges.

Kong. Letteri drafted Ben Snow as co-VFX supervisor

early and, later, added two more top supes (see sidebar, below). "We

have about 20 to 25 percent more people," says Letteri. "System-wise,

we’ve about doubled our capacity in terms of render farm, disk space,

and everything since Rings." As on previous films,

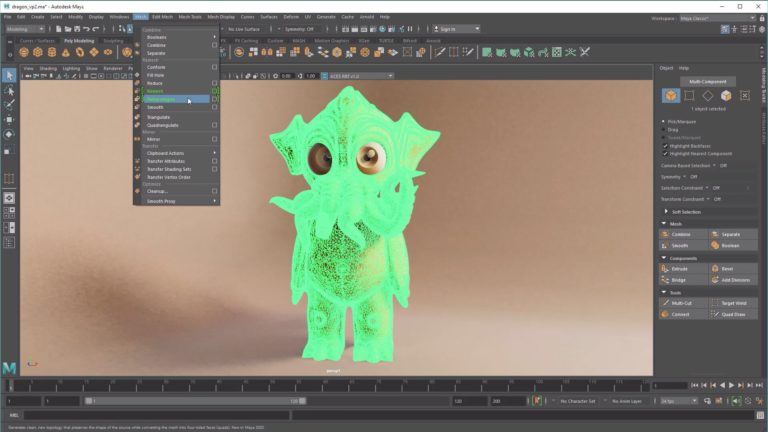

Weta built its pipeline around Alias’s Maya, Pixar’s RenderMan and

Apple’s Shake. Massive software, which had moved armies for

Rings, managed the digital people and vehicles that

populate Weta’s digital New York.

ability to work with 3D, so it’s good to help build environments and do pre-comp," says Letteri. "And we still have Infernos here."

for Kong, they created an in-house DI department based on a Discreet Lustre system. [Color Supervisor Peter Doyle worked with Supervising Digital Colorist David Cole and Lead Colorists Billy Wychgel and Melissa Hangleon.] "We also streamlined and improved things in the back end – the hardware side, the networking and infrastructure," says Letteri, "and we had one big philosophical change in the pipeline: We are more geared toward pulling information together at render time than carrying it through individual scenes."

low-resolution proxies rather than highly detailed geometry, whether they’re juggling complex scenes or complex characters. The high-resolution geometry rolls into RenderMan at render time.

have all the fur in it – and you obviously can’t open up a scene with all of New York in it – so we built a system that uses proxies," explains Letteri. "Everything is actually generated at render time. The system outputs whatever the camera is seeing whenever it needs it."

RIB archives to see low-res on screen and then output high-res at render time. But rather than relying on RIB archives, Weta integrated a more flexible custom system into its pipeline.
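The proxy approach Letteri describes can be illustrated with a small Python sketch. This is a toy model under stated assumptions, not Weta's system: the `Proxy` class, the depth-range visibility test, and the asset names are all hypothetical. It shows only the general idea that a scene carries lightweight stand-ins, and heavy geometry is generated at render time for what the camera sees.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

Point = Tuple[float, float, float]


@dataclass
class Proxy:
    """Hypothetical lightweight stand-in: a name, a bounding sphere,
    and a deferred generator for the heavy geometry."""
    name: str
    center: Point
    radius: float
    generate: Callable[[], List[Point]]  # called only at render time


def visible(proxy: Proxy, near: float, far: float) -> bool:
    """Toy visibility test: the camera looks down +z and sees a depth range."""
    z = proxy.center[2]
    return z + proxy.radius >= near and z - proxy.radius <= far


def render(scene: List[Proxy], near: float, far: float) -> Dict[str, int]:
    """Expand only the visible proxies; return the point count per asset."""
    return {p.name: len(p.generate()) for p in scene if visible(p, near, far)}


generated: List[str] = []  # records which assets were actually expanded


def dense_points(name: str, n: int) -> Callable[[], List[Point]]:
    """Build a generator that produces n points when invoked."""
    def build() -> List[Point]:
        generated.append(name)
        return [(float(i), 0.0, 0.0) for i in range(n)]
    return build


scene = [
    Proxy("kong_fur", (0.0, 0.0, 5.0), 1.0, dense_points("kong_fur", 50_000)),
    Proxy("far_building", (0.0, 0.0, 500.0), 10.0,
          dense_points("far_building", 200_000)),
]

counts = render(scene, near=0.1, far=100.0)
```

In this sketch the scene list stays small no matter how heavy the assets are, and the distant building is never expanded because it falls outside the camera's depth range.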

you start building bigger and more complex scenes, you still need fast feedback and turnaround time, and this is the only way to manage it. You can’t wait forever for things to update."

motion-capture tools. New fur software made the ape hairy, new fluid-simulation software moved oceans, and new motion-capture tools helped animators ape Kong.

there wasn’t all that much fur," says Letteri. "And, there are good fur engines available – Maya’s got fur, and there’s also Shave and Haircut. But, when it comes down to putting five million hairs on Kong and making sure you can control what they’re all doing for every shot, you need your own software. If something doesn’t look right, you have to be able to open it up and figure out what’s going on."

animate Kong’s five million hairs when he moves, manage collisions with other objects, ruffle his hair in the wind and allow it to get dirty and muddy.

Kong’s voyage to New York. The problems with commercial tools they had used and tested whirled around interaction and scalability. Some fluid solvers could manage an entire ocean or waves breaking around a boat, for example, but not both at the same time.

complicated," says Letteri. "We needed something that would do the broad solution and the small, specific solution where there is interaction."

Modelers created Kong, and the digital doubles he interacts with, in Maya with help from a new tool Weta calls Mudbox. "It acts more like a painting system than a modeling system," says Letteri.

animation for the CG gorilla, the crew turned to the techniques and the actor who had created Rings’ Gollum: they motion-captured Andy Serkis. Like Gollum, a gorilla has similar musculature to a human, even though the body and face shapes are different. This time, though, the crew captured Serkis’s face and body simultaneously, and the animators used data from Serkis’s performance for facial expressions as well. New software made it possible for animators to drive the CG gorilla with the mocap data or with keyframe animation, and a new system interpreted Serkis’s facial expressions before mapping data onto the 3D model.

at the dots on Andy’s face, figures out what his expression says, that he’s sad, for example, and then applies that to Kong. We’re using mocap not just to move geometry around, but to actually interpret the actor’s expression and apply that to the character’s expression."

Naomi Watts had to be real. To capture Watts and create her double, the crew turned to Paul Debevec’s image-based Light Stage system at the ICT Graphics Lab. "We’ve moved more into image-based lighting than in the past for real actors and for real materials for set extensions," says Letteri.

swamped right now, but it’s going well. The show is so big that people could take on large sequences and run with them. It’s given the crew good opportunities." And, perhaps, another Oscar nomination?

Snow (Star Wars: Episode II – Attack of the Clones, Pearl Harbor) came aboard as co-visual effects supervisor. And then, during the last few months, two more heavy-duty VFX supes were brought in: George Murphy, VFX supervisor for Constantine, The Matrix Revolutions and Reloaded, who won an Oscar for Forrest Gump, and Scott Anderson, who received Oscar noms for Starship Troopers and Hollow Man, and won for Babe.

Skull Island, and another on the voyage, so we broke it down roughly that way," Letteri says. "Ben is supervising and shepherding many of the big scenes on Skull Island and New York that we started early on. Scott picked up the new New York shots, and George has a lot of the coverage of Skull Island."

- 2005 Oscar Nomination for Best Achievement in Visual Effects for I, Robot

- 2004 Oscar for Best Visual Effects for The Lord of the Rings: The Return of the King

- 2004 Technical Achievement Award for groundbreaking implementations of practical methods for rendering skin and other translucent materials using subsurface scattering techniques

- 2003 Oscar for Best Visual Effects for The Lord of the Rings: The Two Towers

was CG supervisor/artist on The Abyss, Jurassic Park and Casper. He was an associate visual effects supervisor on Mission: Impossible and visual effects supervisor on Magnolia. Universal’s Kong, scheduled for release December 14, is his fourth film as visual effects supervisor at Weta, following the final two Lord of the Rings movies and I, Robot.
