VFX and post-production technologies evolve constantly. New formats are emerging rapidly, especially in VR and AR, and each one means more data to manage in post-production. From flash to the cloud, new products offer new features and capabilities, but they also create new backend challenges for VFX IT.

To handle the growth of HD, Ultra HD, and 3D digital media in post-production, VFX IT teams commonly deploy scale-out architectures. While most scale-out systems make it easy to drop in storage capacity as needed, VFX IT pros should also consider how a system can help meet business needs. For example, performance can affect time to market, because artists waiting for a scene to load or render cannot work on shots in the meantime. Keeping an eye on capital and operating costs helps ensure that data growth doesn’t bring a matching boom in expenses.

Here are some features VFX companies should consider when investigating a scale-out storage solution:

- Single-pane-of-glass visibility into client and storage performance, as well as available node resources. This gives IT the information necessary to easily troubleshoot and tune the data center.

- Balance workload across nodes at the file level. Finely distributing workloads across nodes ensures that one node doesn’t get overloaded while another sits idle. This improves application performance.

- Support for multiple storage tiers. VFX data has different needs, and these needs change as data flows through the pipeline. The ideal storage architecture should support different storage tiers that can meet evolving data needs. For example, a digital compositor benefits from placing source footage and scratch files on client-side NVMe flash; an editor requires access to larger amounts of data that can stream compressed from a server; and data for completed projects needs to be archived to cost-effective object or cloud storage that keeps it accessible in case it is needed for future projects, like sequels.

- Automatically move files across nodes according to policy. VFX data is high capacity, so leaving data on performance nodes when it no longer needs that performance is an expensive proposition. The ideal VFX storage solution should be able to move data transparently across storage tiers as data needs change, without manual intervention from IT.

- Eliminate common architecture chokepoints. Ideally, VFX scale-out storage will accelerate work by enabling an application to access multiple files in parallel, keep management operations separate from data access to reduce resource contention, and minimize the back-and-forth that applications and storage require when working with files.

- Free from the cache crash. Caches are a Band-Aid for performance problems. They work great when data is in the cache, but when data isn’t in the cache, applications must wait for files to be pulled from nodes into the cache. It’s not uncommon for up to 70% of applications to end up using the same caching server. This imbalance increases cache misses, which makes both the performance gains from caching and application response times unpredictable.
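
To see why a falling hit rate makes response times hard to predict, it can help to work the arithmetic. The sketch below is purely illustrative: the function name and the 1 ms and 20 ms latencies are assumptions, not measurements from any product.

```python
def effective_latency_ms(hit_rate: float, cache_ms: float, miss_ms: float) -> float:
    """Average per-request latency for a given cache hit rate.

    All inputs are hypothetical; miss_ms is the full time to serve a request
    whose file has to be pulled from a storage node.
    """
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    # Weighted average of the fast path (hit) and the slow path (miss).
    return hit_rate * cache_ms + (1.0 - hit_rate) * miss_ms


# Assumed latencies: 1 ms for a cache hit, 20 ms when the file comes from a node.
for rate in (0.95, 0.90, 0.70):
    avg = effective_latency_ms(rate, 1.0, 20.0)
    print(f"hit rate {rate:.0%}: {avg:.2f} ms on average")
```

With these assumed numbers, a drop from a 90% to a 70% hit rate more than doubles the average wait (about 2.9 ms versus 6.7 ms), which is the kind of swing an overloaded caching server can produce.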

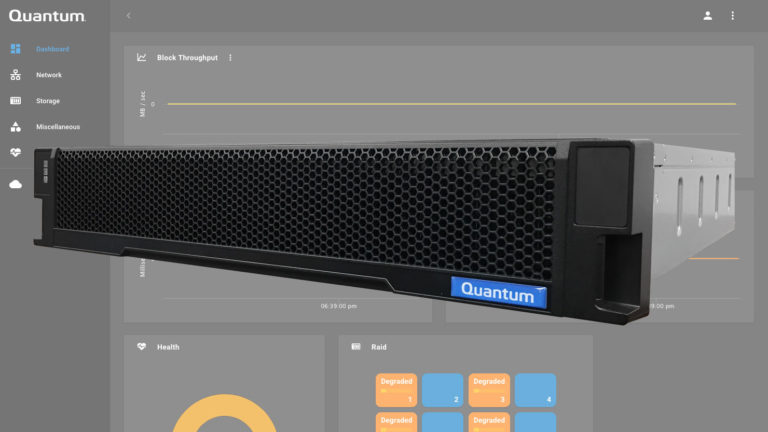
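
The policy-driven movement across tiers described in the list above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not any vendor's policy engine: the tier names (`nvme`, `nas`, `object`), the `FileInfo` fields, and the 7-day and 90-day thresholds are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class FileInfo:
    path: str
    tier: str                 # tier the file currently lives on
    days_since_access: int
    project_complete: bool = False


def target_tier(f: FileInfo, hot_days: int = 7, warm_days: int = 90) -> str:
    """Pick a tier using a simple, assumed age-based policy."""
    if f.project_complete or f.days_since_access > warm_days:
        return "object"       # archive finished or long-idle data
    if f.days_since_access > hot_days:
        return "nas"          # still in use, but no longer needs flash
    return "nvme"             # actively worked on


def plan_moves(files):
    """Return (path, from_tier, to_tier) for every file on the wrong tier."""
    moves = []
    for f in files:
        dest = target_tier(f)
        if dest != f.tier:
            moves.append((f.path, f.tier, dest))
    return moves


files = [
    FileInfo("shot010/plate.exr", "nvme", 2),
    FileInfo("shot004/comp.nk", "nvme", 30),
    FileInfo("film1/final.mov", "nas", 10, project_complete=True),
]
for move in plan_moves(files):
    print(move)
```

A real system would run a policy like this continuously and move the data without changing the paths artists see; the sketch only shows the decision step.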

In addition to the above recommendations, look for a software-based solution. Rather than requiring you to buy yet another appliance, software-based solutions give you the flexibility to use whatever storage hardware meets your data requirements. Some software is vendor-agnostic, which further widens your storage options and increases potential savings.
